High performance computing

Photos by Evan Krape and Kathy F. Atkinson

January 15, 2019

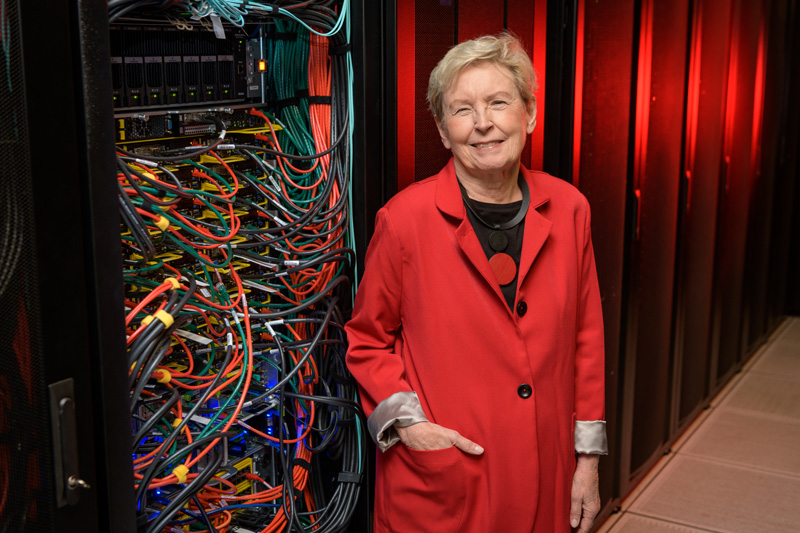

New HPC cluster named for former UD IT director Jane Caviness

The University of Delaware’s newest high performance computing (HPC) community cluster, named Caviness, puts vast computational power at researchers’ disposal.

Named for Jane Caviness, a former UD employee who led a ground-breaking expansion of UD’s computing resources and network infrastructure, the Caviness cluster packs twice the computational power of the previous community clusters, Mills and Farber, into just one-quarter of the data center floor space.

Caviness, and potentially all of UD’s HPC clusters, will benefit from the forthcoming upgrades to UD’s network, as described in UDaily in December. Researchers will gain access to new high-bandwidth capabilities that will speed up downloading and uploading data.

Constructed by UD’s Information Technologies (UD IT) department, Caviness offers UD researchers a more powerful and robust computing environment.

“We are excited by the rapid subscription to the Caviness cluster by research faculty and will be seeking to collaborate with research faculty to design HPC capabilities that broadly support their increasingly diverse computational needs,” said Sharon Pitt, UD vice president and CIO of Information Technologies.

Researchers who invest in an HPC cluster can use it to rapidly solve difficult computational problems that demand both processing power and storage. For example, Josh Neunuebel, assistant professor of psychological and brain sciences, uses the Farber HPC cluster to analyze terabytes of audio and video data of mice to illuminate the impact communication has on the development of mouse social behavior.

To design Caviness, UD IT’s Research Computing group consulted with faculty who rely on HPC to support their research projects. Over the course of two years, the group gathered input from faculty such as Sunita Chandrasekaran, assistant professor in the Department of Computer and Information Sciences. Her expertise in graphics processing units (GPUs) was instrumental in the selection of GPUs for the cluster.

“These GPUs enable new possibilities and scientific advances in the area of high performance computing and deep learning,” Chandrasekaran said, referring to a branch of artificial intelligence. “The Caviness cluster is a very timely installation as most of the computing systems in research centers across the world are increasingly using CPUs and accelerators, most often being GPUs.”

GPUs are very efficient at rendering computer graphics and processing images, and they allow researchers to quickly process large blocks of data. The level of performance that the GPUs in Caviness deliver was once spread across entire clusters; it now comes in a package about the size of a quart of milk.

That kind of performance isn’t just limited to the new GPUs. Stavros Cartzoulas, associate director of computational chemistry, UD Energy Institute, and his research group can attest to the increased performance of the entire cluster.

“Some of the students and postdocs in our group were given early access to test Caviness,” Cartzoulas said. “They reported back run times reduced by at least a factor of two. This is a big deal to them, and their advisors.”

UD IT also collaborated with Penguin Computing on the configuration of the HPC servers to improve the overall footprint and lifespan of the cluster.

Paul Gallagher, southeast sales manager for Penguin, said, “The actual footprint for the data center is less than traditional methods. This allows for a more economical, and environmentally friendly solution that is supported for many years.”

Special consideration was given to each component to ensure a lifespan that extends beyond that of UD’s previous community clusters. Unlike Mills and Farber, Caviness can be upgraded with new hardware as needed to support UD’s growing research computing community. This design, which allows UD IT to keep the cluster online and available to researchers for 8 to 10 years, appeals to research groups that want continuity for graduate students.

Having a local HPC resource like Caviness, especially one that can be expanded in this way, is extremely valuable for researchers.

“My research involves significant computational processes that can only be performed on HPC resources. I also share resources with collaborators at other institutions, so having Caviness on campus will be great for the lab,” said Curtis Johnson, assistant professor of biomedical engineering and a first-time investor in the community cluster program. “Before, we were limited by computation time for large problems and Caviness will allow us to break this barrier and advance the science in the lab.”

The community cluster program itself, which started at UD with the release of the Mills cluster in 2012, provides a wonderful example of how IT supports researchers. Community clusters work on a 50/50 sharing model, in which 50% of the investment is from the faculty and 50% from IT. Often, researchers will invest their grant money in a few nodes on a community cluster in order to provide a proof-of-concept that will, in turn, allow them to get more funding for their research.

“I think that this cluster gives researchers the chance to share an expensive resource and have support on that resource, like technical support and customer support, and keeping it up to date,” said Jane Caviness, former UD employee and the cluster’s namesake.

Caviness, who is no stranger to the computing world, understands the importance of having technical support on a resource like a computing cluster.

“With the support that the research computing group provides, researchers can do their research and not have to worry about the overhead of learning such a facility,” Jane Caviness said.

Caviness was selected as the name for the newest cluster through a voting process that involved faculty and staff across UD.

“It is great to have our new cluster be named after Jane Caviness, who has played a very important role in establishing high performance computing at University of Delaware,” said Arthi Jayaraman, an investor in both the Farber and Caviness clusters and associate professor of chemical and biomolecular engineering and materials science. “Through her achievements in the computing field, both at UD and the National Science Foundation, she is a fantastic role model for all students at UD who aspire to be impactful computer scientists. Additionally, naming this cluster after an outstanding female computer scientist serves as a good reminder to all students that there are outstanding female role models in the field of computing, where most popular known leaders have been men.”

For Caviness, being selected as the namesake for this cluster indicates a growing appreciation for the non-technical aspects of computing.

“The two cluster namesakes before me were real pioneers in the networking field and so I’m particularly honored to be included with Dave Mills and Dave Farber, who are real technical pioneers, and I appreciate that it’s named for me, and I hope that it recognizes some of the non-technical aspects of networking,” Caviness said.

Although the cluster was released to researchers only in early October, the Research Computing group is already seeking investors for the second expansion of Caviness.

Individual research groups are not the only ones that can invest in Caviness. Departments, centers and institutes can also invest and share the cluster’s resources as they see fit. Those interested in buying into the second expansion should review the technical specifications and submit a Cluster Interest form.

Jane Caviness

Jane Caviness is a former director of Academic Computing Services at UD. In the 1980s, Caviness led a ground-breaking expansion of UD’s computing resources and network infrastructure that laid the foundation for UD’s current research computing capabilities. After leaving UD, Caviness went to the National Science Foundation (NSF) as program director for the NSF Network (NSFNET) in the newly formed Division of Networking and Communications Research and Infrastructure, later serving as deputy division director. She oversaw the implementation of the NSFNET’s initial backbone and the expansion of network connectivity between colleges, universities, NSF supercomputer centers, and other research centers. Caviness’s activity in the Association for Computing Machinery (ACM) and EDUCOM, including a term as vice president for networking at EDUCOM, highlights how strong an advocate she has been for cooperation and collaboration in the research computing community.
