Harnessing the power of the world’s fastest computer

Photos by Evan Krape and courtesy of the U.S. Department of Energy | Illustration by Jeffrey C. Chase | October 18, 2022

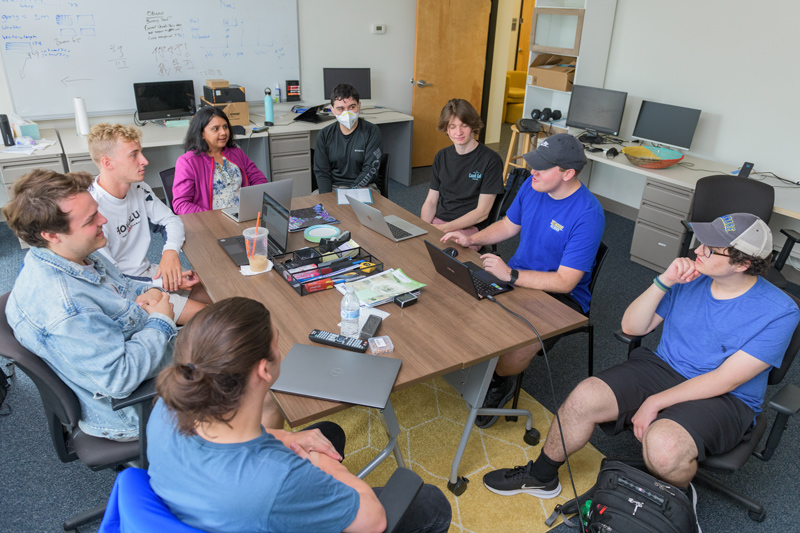

UD Prof. Sunita Chandrasekaran, students play key roles in exascale computing

From fast food to rapid COVID tests, the world has an unrelenting “need for speed.”

The fastest drive-thru in the U.S. this year, with the shortest average service time from placing your order to getting your food, was Taco Bell at 221.99 seconds.

The fastest car, the Bugatti Chiron Super Sport 300+, sped into the record books at 304.7 miles per hour in 2019 and, as of this writing, still holds the title.

And then there is Frontier, the supercomputer at the U.S. Department of Energy’s Oak Ridge National Lab in Oak Ridge, Tennessee. In May 2022, it was named the fastest computer in the world, clocking in at 1.1 exaflops, or more than a quintillion calculations per second. That’s a whole lot of math problems to solve: more than 1,000,000,000,000,000,000 of them every second. The feat earned Frontier the coveted status of the first computer to achieve exascale computing power.

Scientists are eager to harness Frontier for a broad range of studies, from mapping the brain to creating more realistic climate models, exploring fusion energy, improving our understanding of new materials at the nanoscience level, bolstering national security, and achieving a clearer, deeper view of the universe, from particle physics to star formation. And that’s barely scratching the surface.

At the University of Delaware, Sunita Chandrasekaran, associate professor and David L. and Beverly J.C. Mills Career Development Chair in the Department of Computer and Information Sciences, and her students have been working to ensure that key software will be ready to run on Frontier when the exascale computer is “open for business” to the scientific community in 2023.

Because existing computer codes don’t automatically port over to exascale, she has worked with a team of researchers in the U.S. and at Helmholtz-Zentrum Dresden-Rossendorf (HZDR) in Germany to stress-test PIConGPU, a workhorse application that runs the particle-in-cell method on graphics processing units.

A key tool in plasma physics, the particle-in-cell method describes the dynamics of a plasma (matter rich in charged particles such as ions and electrons) by tracking how those particles move through electromagnetic fields computed on a grid from Maxwell’s equations. (James Clerk Maxwell was a 19th-century physicist best known for the four equations that describe electromagnetic theory. Albert Einstein said Maxwell’s impact on physics was the most profound since Sir Isaac Newton’s.) Such tools are critical to advancing radiation therapies for cancer, as well as to expanding the use of X-rays to probe the structure of materials.
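To make the particle-in-cell cycle concrete, here is a deliberately tiny sketch. It is not PIConGPU’s scheme, which is a fully electromagnetic 3D code; it assumes a 1D periodic box, normalized units with the permittivity set to 1, a uniform neutralizing ion background, nearest-grid-point charge deposition, and a simple leapfrog push. The function name `pic_step` and all parameters are hypothetical. Each step repeats the same four stages real PIC codes use: deposit particle charge onto a grid, solve for the field (here with Gauss’s law, one of Maxwell’s four equations), interpolate the field back to the particles, and push the particles.

```python
import math


def pic_step(x, v, n_cells, length, dt, qm=-1.0):
    """Advance electrons one step of a toy 1D electrostatic PIC cycle (in place).

    Hypothetical simplifications: epsilon_0 = 1, periodic domain, uniform ion
    background, nearest-grid-point deposition, leapfrog push.
    Returns the electric field at the left edge of each cell.
    """
    n_part = len(x)
    dx = length / n_cells
    q = -1.0 / n_part  # electrons share a total charge of -1

    # 1. Deposit: start from the ion background (+1 total) and add electron charge.
    rho = [1.0 / length] * n_cells
    for xi in x:
        j = int(xi / dx) % n_cells
        rho[j] += q / dx

    # 2. Field solve: integrate Gauss's law dE/dx = rho across the cells.
    e_edge = [0.0] * n_cells
    for j in range(n_cells - 1):
        e_edge[j + 1] = e_edge[j] + rho[j] * dx
    mean_e = sum(e_edge) / n_cells
    e_edge = [e - mean_e for e in e_edge]  # no net external field in a periodic box

    # 3. Gather the field at each particle's cell center, then 4. push it.
    for i in range(n_part):
        j = int(x[i] / dx) % n_cells
        e_here = 0.5 * (e_edge[j] + e_edge[(j + 1) % n_cells])
        v[i] += qm * e_here * dt          # acceleration = (q/m) * E
        x[i] = (x[i] + v[i] * dt) % length  # wrap around the periodic box
    return e_edge


if __name__ == "__main__":
    # Start from a slightly bunched electron distribution and let it evolve.
    n_cells, n_part, length = 32, 1024, 1.0
    x0 = [(i + 0.5) * length / n_part for i in range(n_part)]
    x = [xi + 0.01 * math.sin(2 * math.pi * xi / length) for xi in x0]
    v = [0.0] * n_part
    for step in range(10):
        field = pic_step(x, v, n_cells, length, dt=0.05)
        print(step, max(abs(e) for e in field))
```

With a perfectly uniform electron distribution the charges cancel, so the field is zero and nothing moves; bunching the electrons produces a field that pushes them back, which is the seed of a plasma oscillation. Production codes replace each stage with far more accurate (and far more parallel) versions, which is why they need machines like Frontier.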

“I tell my students, imagine your laptop connected to millions of other laptops and being able to harness all of that power,” Chandrasekaran said. “But then in comes exascale — that’s a 1 followed by 18 zeros. Think about how big and powerful such a massive system can be. Such a system could potentially light up an entire city.”

Executing instructions on an exascale system requires a “different programming framework” from other systems, Chandrasekaran explained, because its architecture combines many parallel processing units with specialized high-performance graphics processing units.

Overall, Frontier contains 9,408 central processing units (CPUs), 37,632 graphics processing units (GPUs) and 8,730,112 cores, all connected by more than 90 miles of networking cables. All of this computing power helped Frontier leap the exascale barrier, and Chandrasekaran is working to ensure that the software will make the leap, too.

To take advantage of the system’s specialized architecture, she and her fellow researchers are working to make sure the computer code in high-priority software keeps up with Frontier’s speed and is free of bugs. These are key goals of SOLLVE, an Exascale Computing Project (ECP) effort that Chandrasekaran now leads. SOLLVE is a collaboration of Brookhaven National Laboratory, Argonne National Laboratory, Oak Ridge National Laboratory, Lawrence Livermore National Laboratory, Georgia Tech and UD.

“Our team has been working together since 2017 to stress-test the software to improve the system,” Chandrasekaran said, noting that the work involves collaborations with several compiler developers whose implementations support Frontier.

“The machine is so new that the tools we need for operating it are also immature,” Chandrasekaran said. “Our goal is to have programs ready for scientists to use. We assist by filing bugs, offering fixes, testing beta versions, and helping vendors prepare robust tools for the scientists to use.”

UD students debug vital programming tools

Thomas Huber, who earned his bachelor’s degree at UD, worked on the project with Chandrasekaran for more than two years before graduating with his master of science in computer and information sciences from the University this past May. A native of Linwood, New Jersey, he is now employed as a software engineer at Cornelis Networks, a computer hardware company.

“When we started working on this a few years ago, we knew we had Frontier coming at exascale speed, and that required getting a ton of people together to work on the 20 or so core applications that had been deemed mission critical,” Huber said. “All of this software needs to run flawlessly.”

Thanks to this unique opportunity that Chandrasekaran made possible, Huber gained valuable research and real-world experience. He also trained four undergraduates on the project as they worked together to validate that OpenMP, a widely used programming interface for parallel computing, would run correctly on Frontier.

As the group assessed how well compilers implemented new programming features, they found a few bugs, and then a few more. That’s when they decided to start a GitHub repository (GitHub is a platform where software developers host and share code) to share their findings and open source code as part of ECP–SOLLVE.

“We started a GitHub to review the OpenMP specification releases. They come out every few years, and they are like new features — 600 pages of what you can and can’t do,” Huber said. “Most importantly, the section at the end states all the differences among the versions of the program. We take the list of all the new features and go through and create test cases for all of them. We write code that no one else has written before, and we make all of our code public.”

Huber estimates that the UD team, in collaboration with Oak Ridge National Lab, has written about 500 tests and 50,000 lines of code so far.

“The whole thing with high-performance computing is parallel programming,” Huber said. “Imagine you’re in a ton of traffic heading to a toll booth with only one EZ pass lane. Parallel programming allows you to split into many EZ pass lanes. OpenMP allows you to do that parallel work and run extremely fast. What we’ve done with OpenMP ensures that scientists and others will be able to use the program on Frontier. We’re the guinea pigs for it.”
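Huber’s toll-lane analogy maps onto a common parallel pattern: split the work into chunks, let each worker (“lane”) handle its own chunk, then combine the partial results. In C or C++, OpenMP expresses this with a directive such as `#pragma omp parallel for reduction(+:total)`. The sketch below shows the same split-then-combine idea in Python, with `concurrent.futures` threads standing in for OpenMP threads; the function names are hypothetical. Because CPython’s global interpreter lock serializes pure-Python arithmetic, treat it as an illustration of the pattern, not a speed demo. It also checks the parallel answer against a serial reference, echoing how a validation test confirms that a parallel feature gives correct results.

```python
from concurrent.futures import ThreadPoolExecutor


def chunk_bounds(n, lanes):
    """Split range(n) into `lanes` contiguous, nearly equal [start, stop) chunks."""
    base, extra = divmod(n, lanes)
    bounds, start = [], 0
    for k in range(lanes):
        stop = start + base + (1 if k < extra else 0)
        bounds.append((start, stop))
        start = stop
    return bounds


def partial_sum_of_squares(bounds):
    """Work done by one 'lane': sum i*i over its own chunk."""
    start, stop = bounds
    return sum(i * i for i in range(start, stop))


def parallel_sum_of_squares(n, lanes=4):
    """Run each chunk on its own worker, then combine (reduce) the partial sums."""
    with ThreadPoolExecutor(max_workers=lanes) as pool:
        return sum(pool.map(partial_sum_of_squares, chunk_bounds(n, lanes)))


if __name__ == "__main__":
    # Validate the parallel result against a serial reference.
    n = 100_000
    serial = sum(i * i for i in range(n))
    assert parallel_sum_of_squares(n, lanes=8) == serial
    print("parallel result matches serial reference")
```

The combine step matters: if every lane added directly into one shared total without coordination, updates could be lost. A reduction gives each lane a private partial result and merges them at the end, which is what the OpenMP `reduction` clause arranges automatically.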

Huber was attracted to the research through the Vertically Integrated Program (VIP) in the College of Engineering. Chandrasekaran was the group leader for the project. He stuck around for a semester, got to work on a research paper (“That was amazing,” he said) and met colleagues who became best friends. They even won a poster competition.

He credits Chandrasekaran for engaging him in the field.

“Being so enthusiastic and emphasizing how important this stuff is to helping researchers, and the real world, she made the difference,” Huber said. “She’s a top-tier professor in high-performance computing.”
