Coming soon: More computing muscle

July 03, 2017

Next community cluster to expand computational capabilities, increase operating life span

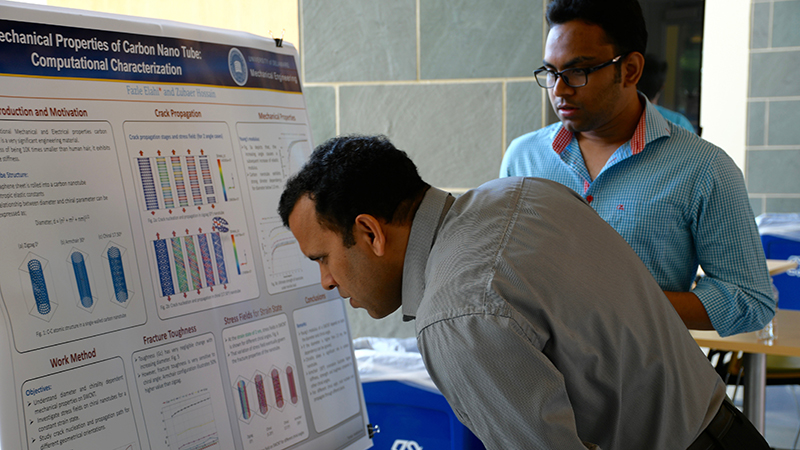

An enormous amount of today's research draws on high-performance computing (HPC) -- for mathematical calculations, data collection and analysis and all sorts of computational modeling -- and a small but wonderfully diverse sample of that was on display Wednesday, June 28, at the University of Delaware's inaugural HPC Symposium poster session.

The session, hosted by UD's Information Technologies Research Computing group, showed details of studies ranging from cellular mechanics in the virus that causes AIDS to finding ways to reuse heat, understanding why some materials fracture, molecular modeling of composite materials and ballistic fibers, and looking at new ways of engineering thin films for solar cells, to name just a few.

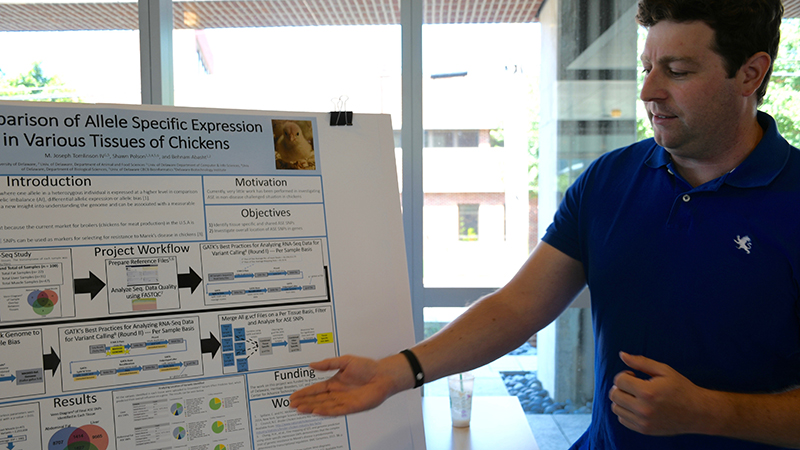

"The datasets are so large in bioinformatics that you are dependent on HPC," said M. Joseph Tomlinson IV, who is working on a master's degree in bioinformatics to add to his doctorate in biochemistry and molecular genetics. "Everything is driven by big data."

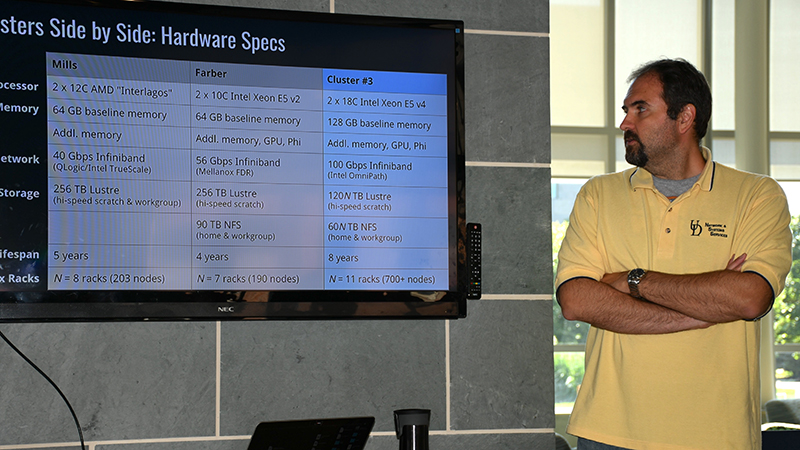

Now UD is preparing to deploy its third research computing community cluster, an effort that began in 2012 with the system known as Mills and continued in 2014 with a system known as Farber. Each cluster is designed to leverage state-of-the-art technologies.

"We're focusing on increasing not just the capacity and capability but also the efficiency of this system," said Jeffrey Frey, a systems programmer on UD's HPC team, as he gave attendees an overview of IT's strategy.

That strategy includes doubling the speed of the network linking the components of the cluster and increasing the processor count by more than 200 percent. But all of that additional capacity requires only 8 percent more floor space, Frey said.

It's great news for UD's research community.

"Right now, how far we can go is only limited by resources," said Raja Ganesh, a doctoral student in mechanical engineering who works on multiscale modeling at the Center for Composite Materials. "With more resources, we increase the domain of problems we can look at. At the same time, the more realistic you want your model, the more resources it takes."

Buying into a community-wide computing cluster offers researchers a cost-effective way to gain high-power computation at discounted rates, with maintenance, support and administrative duties covered by UD's Information Technologies team.

The kind of research doctoral student Chaoyi Xu is doing would not be possible without those resources. He uses HPC for calculations needed in the study of free energy and nucleotide movement within the cells of HIV.

"We simulate every step," said Xu, who is working with new faculty member Juan Perilla in the Department of Chemistry and Biochemistry. "We can literally see how the nucleotide goes through the hexamer."

It's nanoscale analysis with complex demands. Ten nanoseconds covers about 500,000 steps, he said, and such analysis can take 2 1/2 days of computation.
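For a rough sense of scale, those figures can be turned into a per-step cost. The short Python sketch below is illustrative only: it assumes the 2 1/2 days of computation refers to a single run of 500,000 steps covering 10 nanoseconds, which is not stated explicitly, and the variable names are hypothetical.

```python
# Back-of-the-envelope arithmetic from the figures quoted above.
# Assumption: the 2.5 days of computation corresponds to one run of
# 500,000 steps covering 10 nanoseconds of simulated time.
SIMULATED_NS = 10
STEPS = 500_000
WALL_CLOCK_DAYS = 2.5

# Convert the wall-clock time to seconds, then divide by the step count.
wall_clock_seconds = WALL_CLOCK_DAYS * 24 * 60 * 60
seconds_per_step = wall_clock_seconds / STEPS

# Simulated time covered per day of computation.
ns_per_day = SIMULATED_NS / WALL_CLOCK_DAYS

print(f"Wall-clock seconds per step: {seconds_per_step:.3f}")
print(f"Simulated nanoseconds per day: {ns_per_day:.1f}")
```

Under that assumption, each step costs a little under half a second of computation, and a day of computing covers about 4 nanoseconds of simulated time.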

The time and resources required to run jobs on the clusters vary widely, Frey said, and satisfying that diversity while maintaining a viable tool over an eight-year period is the major challenge IT staff have faced while designing the next community cluster.

The design, encompassing many innovations and improvements relative to the Mills and Farber clusters, has met with strong positive feedback from faculty and staff.

It opens up many new possibilities.

"The more resources you have to run data, the better off you are," Tomlinson said. "I don't know any field here that won't need computational resources."

About UD’s HPC clusters

Mills -- Named for David L. Mills, an Internet pioneer and UD professor emeritus in the Department of Electrical and Computer Engineering, the Mills cluster was UD's first and reached the end of its five-year life span in January of this year. It has 203 compute nodes, 5,160 cores and a high-speed network operating at 40 gigabits per second.

Farber -- Named for David Farber, an Internet pioneer and UD professor and distinguished policy fellow in the Department of Electrical and Computer Engineering, the Farber cluster was UD's second cluster and will reach the end of its life cycle late next year. It has 190 compute nodes, 3,800 cores and a high-speed network operating at 56 gigabits per second.

Cluster 3 -- Not yet named. It will have the capacity to grow to more than 700 nodes (20,000 cores by current specifications) over its life span of eight years, with a high-speed network initially operating at 100 gigabits per second. A predominantly reusable infrastructure will allow for lower-cost upgrades of nodes as they age. New investment options and changes to job scheduling are expected to increase the utility of this cluster over its predecessors.
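The three sets of specifications can be compared side by side with a little arithmetic. The Python sketch below is a rough illustration only: it uses the node, core and network figures quoted above, treats Cluster 3's "more than 700 nodes" as exactly 700 (an assumption, and a projected maximum rather than its initial size), and the names `clusters` and `cores_per_node` are hypothetical.

```python
# Node, core and network figures as quoted in the cluster descriptions above.
# Assumption: Cluster 3 is entered at 700 nodes and 20,000 cores, the
# projected capacity over its life span, not its size at launch.
clusters = {
    "Mills": {"nodes": 203, "cores": 5160, "network_gbps": 40},
    "Farber": {"nodes": 190, "cores": 3800, "network_gbps": 56},
    "Cluster 3": {"nodes": 700, "cores": 20000, "network_gbps": 100},
}


def cores_per_node(spec):
    """Average number of cores per compute node for one cluster."""
    return spec["cores"] / spec["nodes"]


for name, spec in clusters.items():
    print(
        f"{name}: {cores_per_node(spec):.1f} cores per node, "
        f"{spec['network_gbps']} Gb/s network"
    )
```

On these figures, the averages work out to roughly 25.4 cores per node for Mills, 20.0 for Farber and 28.6 for Cluster 3 at its projected capacity.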
