Exploring what lies beneath

Photos by Evan Krape August 31, 2016

Video by Ashley Barnas

Robotic fleet captures trove of Broadkill data in mapping bootcamp

With tropical storms starting to rev up in the Atlantic Ocean, the timing of the University of Delaware's Autonomous Systems Bootcamp was ideal, and experts from UD and around the world converged at the Hugh R. Sharp Campus in Lewes to make the most of it.

The mission of the weeklong deployment was straightforward: Develop a detailed, baseline profile of a 5-square-kilometer area of Broadkill Beach, focusing on a recent U.S. Army Corps of Engineers dredging and replenishment program where scientists could see – in close proximity – both a natural beach and a replenished beach.

The area had not been surveyed by the National Oceanic and Atmospheric Administration (NOAA) for more than 40 years, according to UD oceanographer Art Trembanis, and this work – with so many skilled hands on duty – would accomplish in one week what might otherwise take months or years.

Capturing such a profile just before a major storm is a great plus from a researcher's perspective. Fresh data showing conditions before the storm offers many opportunities to learn how storms change coastlines and underwater landscapes.

In addition to that scientific trove, the convergence of expertise at the bootcamp offered each participant a practical demonstration of the technology available and how layers of data can be correlated and used to analyze conditions and build better plans.

Converging in Lewes

UD oceanographer Art Trembanis (second from left) watches the work with the EcoMapper team - Luis Rodriguez, Andrew Keppel and Tor Inge Loenmo.

The Gavia is lowered from the R/V Daiber into the water to start its work.

The Teledyne Oceanscience Z-Boat is an autonomous surface vehicle that uses sonar and radio communication to survey areas that are difficult or unsafe to reach. Photo: Beth Miller

The EcoMapper heads offshore to a nearby coordinate, where it will submerge and begin its underwater mission, traveling about one meter above the seafloor.

Bootcamp participants gather with their robots for a farewell aerial shot. Photo: Stephanie Dohner, doctoral student and drone pilot

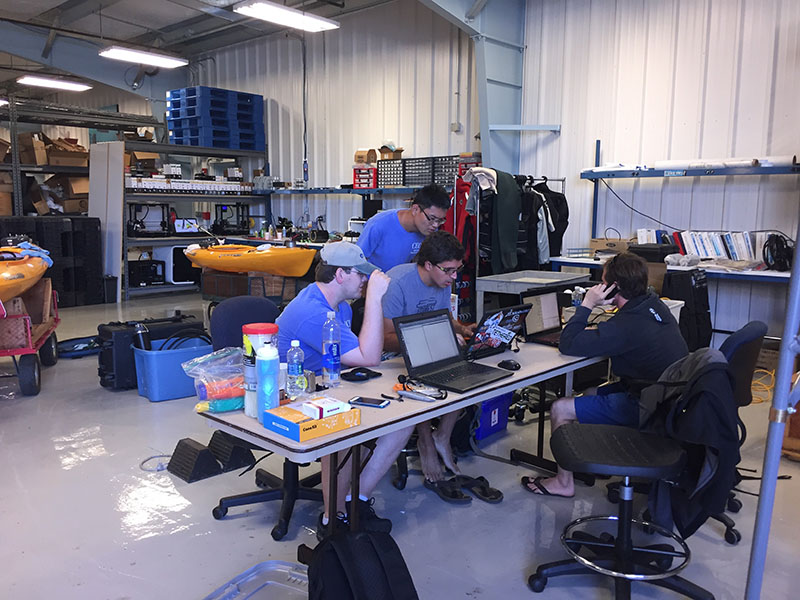

Researchers plot and track each segment of the EcoMapper's mission with mapping software.

The sight of the unmanned kayaks - each outfitted with a variety of sensors - sometimes is unnerving to beachgoers, who assume some humans have gone missing. Photo: Beth Miller

Andrew Keppel of the U.S. Naval Academy (right) checks the map before launching an EcoMapper autonomous underwater vehicle at Broadkill Beach.

The R/V Dogfish pontoon hauls two unmanned kayaks to the spot where they will begin data collection.

Carter DuVal, a UD doctoral student in oceanography, checks the map as the R/V Daiber heads toward Broadkill Beach, where the Gavia autonomous underwater vehicle (on deck) will be launched.

Luis Rodriguez of the U.S. Naval Academy and Tor Inge Loenmo of Norway carry the 85-pound EcoMapper into the water.

As information comes in, researchers gather in the Robotics Discovery Lab to see what they've got. Photo: Beth Miller

With UD's new Robotics Discovery Lab (RDL) as the home base, students and scientists from nine universities, the U.S. Naval Academy and several tech firms fanned out in four teams each day to gather images and other data that would be used to analyze and map the area, including several waterways near the beach.

At their disposal were a fleet of robotic devices for surface, underwater and aerial surveys, two research vessels, several kayaks, assorted cameras and sonar sensors and lots of other gear.

For hours each day, there were drones in the air, autonomous kayaks moving up and down the shoreline, and torpedo-like underwater devices measuring conditions about a meter above the seafloor.

More than 50 students, professors, researchers and technical experts participated in the event, including some from Australia, China, Norway, Italy, Spain, California, and New England. There were marine and environmental scientists, engineers, biologists, geologists, grad students and undergrad students.

It was the fifth such bootcamp, the first hosted by UD's College of Earth, Ocean, and Environment, where the RDL was developed by Trembanis (oceanography), and Mark Moline and Matt Oliver (both of marine science and policy).

Abundant harvest of information

And the concentration of brainpower and technology produced an abundant harvest.

"We came away with a whole host of great new mapping data and learned that the diverse coastal environments of Delaware Bay present meaningful challenges for robotic approaches to environmental mapping," Trembanis said. "New collaborations and several new research proposals are already in the works as is a manuscript highlighting the effort."

Analysis of the enormous amount of data captured by the teams is underway and a new map and three-dimensional model are under construction. Soon, they will be available to the public.

"We discovered that the offshore area immediately adjacent to Broadkill Beach is surprisingly diverse in terms of seafloor texture and shape and that shallow-water mapping is very challenging but can be meaningfully addressed by the application of autonomous platforms ranging from aerial drones to autonomous kayaks to underwater vehicles," Trembanis said.

Alex Forrest of the University of California-Davis studies environmental fluid mechanics, especially in deepwater lakes. He uses underwater instruments to measure troughs, other seafloor features and the hardness of materials.

He and a student from his lab, Supun Randeni, were among those aboard the research vessel (R/V) Joanne Daiber, from which a Gavia autonomous underwater vehicle would be launched. The instrument can measure the seafloor, dissolved oxygen, temperature, salinity, turbidity and chlorophyll, among other things.

Regular cameras would not be too useful in these waters, Forrest said, where visibility was probably a meter at most. In Lake Tahoe, it's more like 30 meters.

"The turbid waters of our coast means we don't know what lies beneath," Trembanis said. "Autonomous systems open up many platforms.... And you can take risks that you can't take with people."

‘Mowing the lawn’

The robots also relieve humans of the monotony of hours and hours of observation. They move in a programmed grid formation – "mowing the lawn," Trembanis calls it – to reach every portion of the survey area, including the marsh, the dunes and the offshore areas.

Forrest's student had the opportunity to test a method he had developed to measure the influence currents have on the autonomous devices, a method Trembanis said proved immediately helpful.

"The data has proved useful as it is now allowing us to generate new measurements from our previous missions of what the currents were like in the bay that moved the AUVs around as they swam under water," he said. "This is a great example of how the opportunity of bootcamp allows us to leverage the collective expertise of our attendees and enhance the mapping effort."

On the beach, biologist Andrew Keppel and Luis Rodriguez of the Naval Academy were launching an EcoMapper – another autonomous underwater instrument – that uses side-scan sonar to explore the seafloor and characterize conditions and objects there. They worked with Tor Inge Loenmo of Oslo, Norway, who now is an exchange student at the University of New Hampshire.

Watching these instruments at work isn't the thrilling part of this mission. More often than not, they are underwater or a long way off.

"The only thing more exciting than seeing sub-sea robots work is watching grass grow," Trembanis said with a grin.

What is truly fascinating is seeing what those robots bring home – layers of data in unprecedented detail – and then using that new information to improve future response to storms and prevention of damage.

Stephanie Dohner of Englewood, Ohio, a first-year doctoral student in Trembanis' lab, is working on predictive models that draw from the collected data.

"I am learning very quickly how incredibly fun it is to be in a marsh and the next day be on the beach, and that I can incorporate kayaking into my research – always a great day," she said. "Part of the project is creating a monitoring system for those who don't do this all the time, for local municipalities to take control of their area and be able to document it."

Mark Ryan and his son, Kyle, of Lewes-based Ryan Media brought tethered drones to document the work and demonstrate the capacities their equipment could offer.

"This is world class what's happening here," Mark Ryan said of the bootcamp. "Art is out front of everybody that we're aware of. He's a visionary and our industry needs one. He's masterful in choreographing the art and science of what is required for this. The science is obvious, but the art is in assembling a team that is going to integrate and be inclusive and produce the holistic results."

And make plans for the future, wherever it may lead.

"We learned and witnessed that many exciting opportunities exist for students to engage and discover project and career challenges in the field of environmental robotics and highlighted the unique capacity of the UD Robotics Discovery Lab in being able to host and facilitate a unique workshop like this," Trembanis said.

Used at bootcamp

Vessels and technology in use throughout the week included:

• Research vessels Daiber and Dogfish;

• Baysport boat;

• Teledyne Z-Boat;

• Scout kayaks (3);

• Autonomous underwater vehicles: Gavia, EcoMapper, REMUS and Slocum Glider;

• ATOM 4000 tethered drone;

• Unmanned aerial systems and unmanned aerial vehicles: DJI Phantom 3, SenseFly eBee, InfiniteJib/HyPack Nexus 800;

• RGB (red green blue) camera;

• NIR (near infrared) camera; and

• LiDAR (Light Detection and Ranging) equipment.
