Taking 'multi-core' mainstream

UD professor works to overcome challenges in harnessing power of multicore computer processors

11:34 a.m., Jan. 14, 2013--Computer processors that can complete multiple tasks simultaneously have been available in the mainstream for almost a decade. In fact, almost all processors developed today are multicore processors. Yet, computer programmers still struggle to efficiently harness their power because it is difficult to write correct and efficient parallel code.

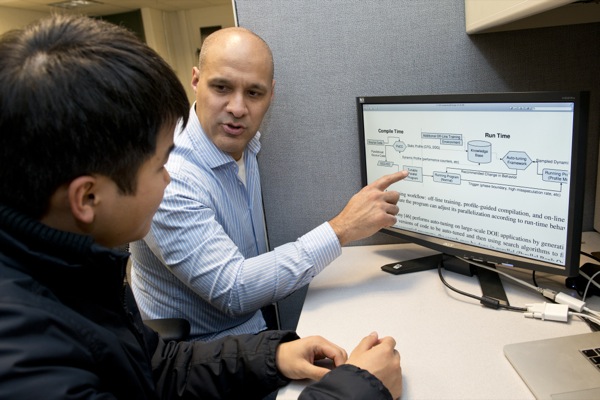

According to the University of Delaware’s John Cavazos, to effectively exploit the power of multicore processors, programs must be structured as a collection of independent tasks, with separate tasks executed on separate cores.

The complexity of modern software, however, makes this difficult.
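For readers who want a concrete picture of a program structured as independent tasks, the following is a minimal sketch and is not drawn from Cavazos' project. The function names (`count_primes`, `split_range`, `parallel_count_primes`) and the prime-counting workload are illustrative assumptions. Each chunk of the range is a task that depends only on its own arguments, so the tasks can be handed to separate workers and their results combined at the end.

```python
from concurrent.futures import ThreadPoolExecutor


def count_primes(bounds):
    """Count primes in [lo, hi). Depends only on its own arguments."""
    lo, hi = bounds
    count = 0
    for n in range(max(lo, 2), hi):
        # Trial division up to the square root of n.
        if all(n % d for d in range(2, int(n ** 0.5) + 1)):
            count += 1
    return count


def split_range(lo, hi, parts):
    """Split [lo, hi) into contiguous, non-overlapping chunks."""
    step = max(1, (hi - lo + parts - 1) // parts)
    return [(s, min(s + step, hi)) for s in range(lo, hi, step)]


def parallel_count_primes(lo, hi, workers=4):
    """Run one independent task per chunk and sum the partial counts."""
    chunks = split_range(lo, hi, workers)
    # Assumption: a thread pool keeps the sketch self-contained. In CPython,
    # CPU-bound work like this would typically use ProcessPoolExecutor
    # (same interface) to actually occupy several cores.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(count_primes, chunks))


if __name__ == "__main__":
    print(parallel_count_primes(0, 100))
```

The sketch works only because no chunk reads or writes data owned by another chunk. Real applications rarely decompose this cleanly, which is the difficulty the article describes.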

Now Cavazos, assistant professor of computer and information sciences, is attempting to invent new algorithms and tools for parallelization of large-scale programs as principal investigator (PI) of a new National Science Foundation (NSF) grant.

The work, funded through a $497,791 grant from the Division of Computing and Communication Foundations, is a collaborative effort with Michael Spear, assistant professor of computer science and engineering at Lehigh University. It involves using a novel combination of automatic and profile-driven techniques to address fundamental issues in creating parallel programs.

Compilers translate applications written by software developers into machine code that executes on a computer. One of their important tasks is to help applications run more efficiently through parallelization. However, while a variety of parallelization techniques are available, such as automatic parallelization and speculative parallelization, no single technique is applicable to all programs.

Cavazos will use machine learning to create a system that enables a compiler to analyze a program, assess which parallelization technique is suitable, and then automatically apply that technique to the program. He is also investigating how to enable the program, through learning, to adapt its behavior based on outside inputs and environments.

The project extends research he began in 2010, when he received an NSF Faculty Early Career Development Award to develop adaptive compilers for multicore computer environments. It also complements his recent work with the Defense Advanced Research Projects Agency to construct an extreme-scale software framework capable of automatically partitioning and mapping application code to a multicore system and generating “SMART” applications that can reconfigure underlying hardware to save power.

“This research will enable a greater percentage of programs to benefit from multicore processors by providing feedback to programmers so that they can improve the code, and by integrating adaptability so that a broader range of programs can achieve increased speed,” Cavazos said.

As the project progresses, Cavazos plans to develop new algorithms and tools for speculative parallelization, a technique that allows shared-memory systems to execute in parallel certain loops that a compiler cannot otherwise prove to be parallelizable. Ultimately, he hopes to distribute the new prototypes and source code as open-source software.
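The general idea behind speculative parallelization can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the algorithms Cavazos plans to develop. The loop body (`out[w] = out[r] + 1`), the function names, and the conservative conflict check are all hypothetical. Because the read and write positions are known only at run time, a compiler cannot prove the iterations independent, so the sketch runs them optimistically against a snapshot, checks afterward whether any iteration touched a location written by another, and falls back to the sequential loop if so.

```python
from concurrent.futures import ThreadPoolExecutor


def sequential_loop(data, reads, writes):
    """Reference semantics: iteration i does out[writes[i]] = out[reads[i]] + 1."""
    out = list(data)
    for r, w in zip(reads, writes):
        out[w] = out[r] + 1
    return out


def speculative_loop(data, reads, writes, workers=4):
    """Return (result, speculation_succeeded)."""
    snapshot = list(data)  # every speculative iteration reads the original values

    def body(i):
        # Compute the value without committing it to shared state.
        return writes[i], snapshot[reads[i]] + 1

    with ThreadPoolExecutor(max_workers=workers) as pool:
        pending = list(pool.map(body, range(len(reads))))

    # Conservative validation: fail if two iterations write the same location,
    # or if an iteration reads a location that a different iteration writes.
    written = set(writes)
    conflict = len(written) != len(writes) or any(
        r in written and r != w for r, w in zip(reads, writes)
    )
    if conflict:
        # Misspeculation: discard the pending writes and rerun in order.
        return sequential_loop(data, reads, writes), False

    out = list(data)
    for w, value in pending:
        out[w] = value  # commit the speculative results
    return out, True
```

When the index arrays happen to be disjoint, the parallel result is committed as is. When they overlap, the speculative work is thrown away and only the sequential result is kept, so correctness never depends on the guess being right.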

The project's educational component includes training graduate students and integrating performance-related topics into his classroom instruction.

“Helping students to learn the fundamentals of creating parallel code that is both correct and efficient needs to be a major educational goal in our computer science departments,” said Cavazos.

Article by Karen B. Roberts

Photo by Lane McLaughlin